Algebraic Neural Networks

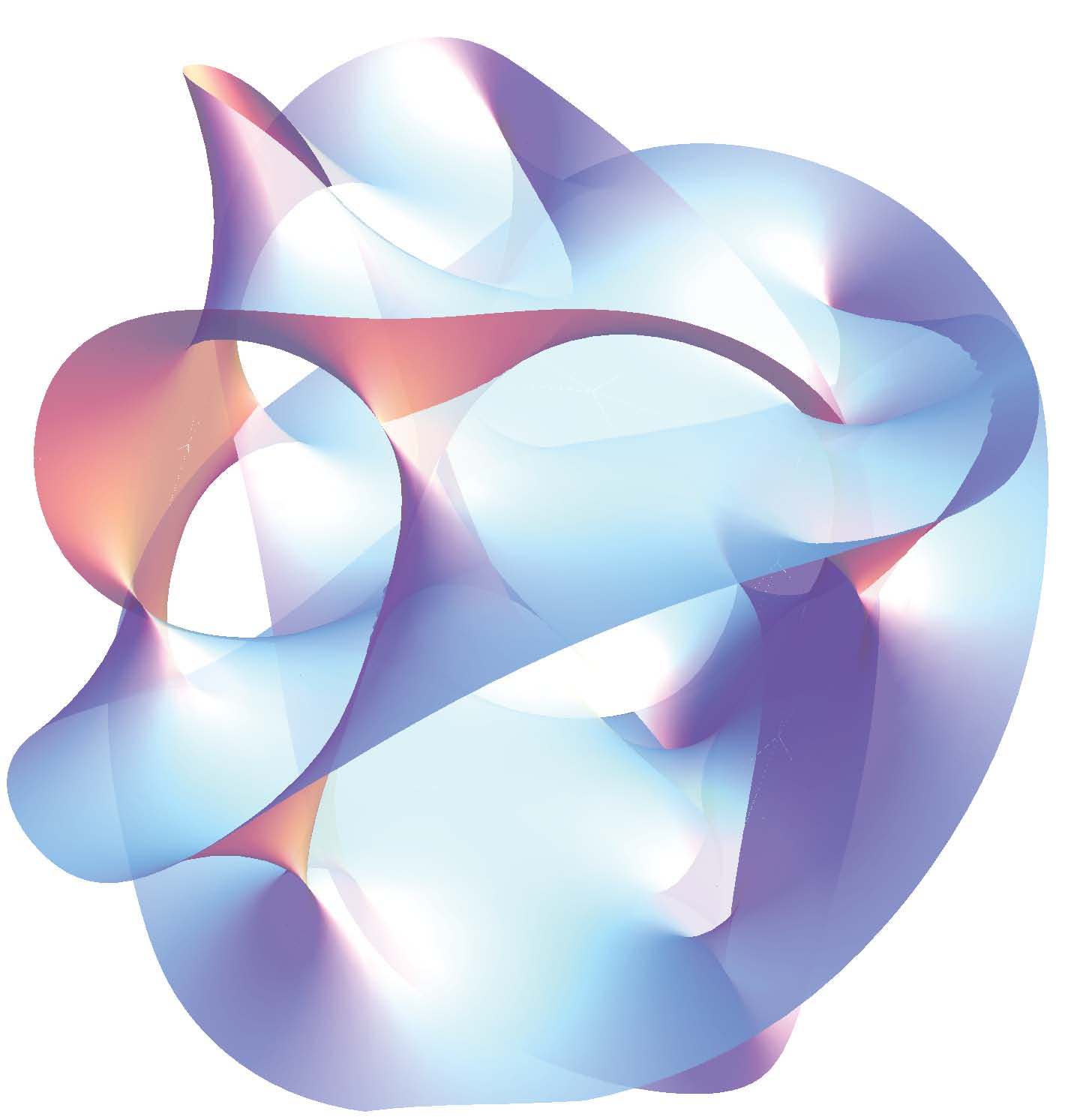

Alejandro Ribeiro, University of Pennsylvania, 12:00 ET

Abstract: We study algebraic neural networks (AlgNNs) with commutative algebras, which unify diverse architectures such as Euclidean convolutional neural networks, graph neural networks, and group neural networks under the umbrella of algebraic signal processing. An AlgNN is a stack of layers, each composed of an algebra, a vector space, and a homomorphism between the algebra and the space of endomorphisms of the vector space. Signals are modeled as elements of the vector space and are processed by convolutional filters, which are defined as the images of the elements of the algebra under the action of the homomorphism.
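As a small illustration of the filter definition above, the sketch below takes the algebra to be polynomials in one variable and the homomorphism to be the map that sends the variable to a shift matrix. These choices, the helper name `algebraic_filter`, and the cyclic shift on four samples are assumptions made for the example, not details taken from the talk.

```python
import numpy as np

def algebraic_filter(coeffs, shift):
    """Image of the polynomial sum_k coeffs[k] * t**k under the
    homomorphism that maps the variable t to the matrix `shift`."""
    n = shift.shape[0]
    out = np.zeros((n, n))
    power = np.eye(n)  # shift**0
    for h in coeffs:
        out = out + h * power
        power = power @ shift
    return out

# Hypothetical example: cyclic shift on 4 samples (discrete-time convolution).
S = np.roll(np.eye(4), 1, axis=0)
x = np.array([1.0, 0.0, 0.0, 0.0])      # signal: an element of the vector space
H = algebraic_filter([0.5, 0.25, 0.25], S)
y = H @ x                               # filtered signal
```

Replacing `S` with a graph adjacency or Laplacian matrix would give a graph filter under the same construction, which is the sense in which one algebra can cover several architectures.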

We analyze stability of algebraic filters and AlgNNs to deformations of the homomorphism and derive conditions on filters that lead to Lipschitz stable operators. We conclude that stable algebraic filters have frequency responses – defined as eigenvalue domain representations – whose derivative is inversely proportional to the frequency – defined as eigenvalue magnitudes. It follows that for a given level of discriminability, AlgNNs are more stable than algebraic filters, thereby explaining their better empirical performance. This same phenomenon has been proven for Euclidean convolutional neural networks and graph neural networks. Our analysis shows that this is a deep algebraic property shared by a number of architectures.
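One way to read the stability condition above is that the product of the frequency and the derivative of the frequency response stays bounded. The sketch below estimates that bound numerically for a polynomial frequency response. The function name `integral_lipschitz_constant`, the sampled frequency range, and the coefficients are hypothetical choices for illustration.

```python
import numpy as np
from numpy.polynomial import polynomial as P

def integral_lipschitz_constant(coeffs, lambdas):
    """Largest value of |lambda * h'(lambda)| over the sampled frequencies,
    where h(lambda) = sum_k coeffs[k] * lambda**k."""
    deriv = P.polyder(coeffs)  # coefficients of h'
    return float(np.max(np.abs(lambdas * P.polyval(lambdas, deriv))))

# Assumed setting: eigenvalues (frequencies) lie in [-1, 1].
lambdas = np.linspace(-1.0, 1.0, 201)
coeffs = [0.5, 0.25, 0.25]
C = integral_lipschitz_constant(coeffs, lambdas)
```

A smaller constant means the response varies more slowly at large frequencies, which is the trade-off between stability and discriminability that the abstract refers to.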

Further details at https://arxiv.org/abs/2009.01433 and https://gnn.seas.upenn.edu/lecture-12